Can Portland Stop Tech Companies from Using Your Face?

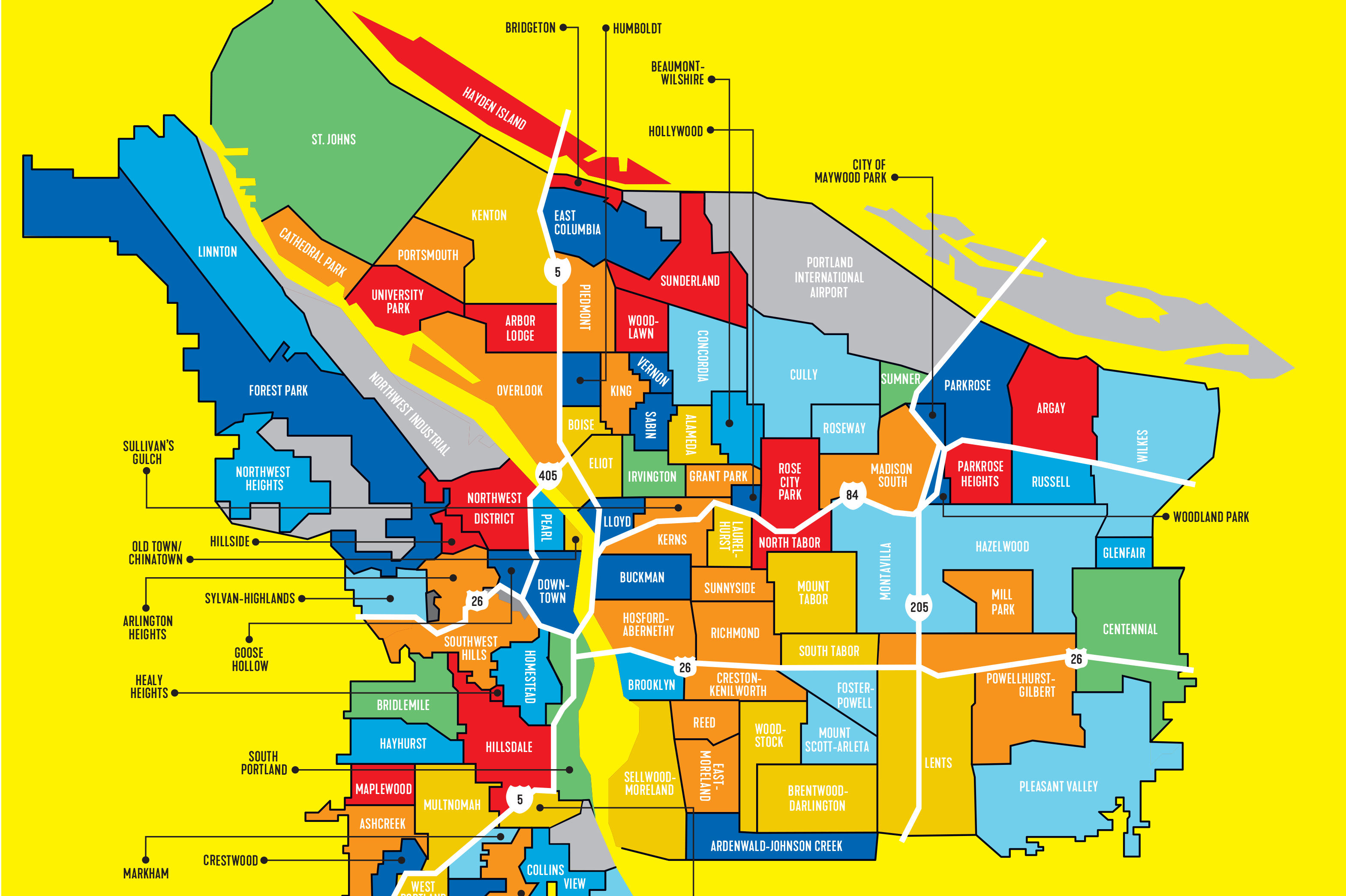

Image: Brian Breneman

Stroll through any leafy Portland neighborhood, and unbeknownst to you, video-equipped smart doorbells and security cameras could be capturing and storing pictures of your face.

There’s no federal regulation of facial recognition technology. Thus, there are no clear limits on who can build biometric gathering systems, no standards for accuracy, and no best practices to apply. Private companies have leapt into the void, assembling vast photo databases with images scraped from the internet; their algorithms can instantly match an image from a public surveillance camera with photos in their database and spit out an ID.

Their customers span law enforcement agencies, from local police to the FBI, and also include retail stores looking to nail shoplifters and recruiting firms weeding out job candidates.

Now, Portland city council members are prepping a first-of-its-kind ban outlawing the use of facial recognition software in all public spaces by private entities, as well as by the city government and local police. Under the proposed law, not only would local law enforcement be banned from collecting your biometric data, so would big retailers and financial institutions.

Social justice and civil rights organizations, including the Urban League and Verde, lobbied for the all-encompassing ban in Portland, citing a need to build regulations before corporations see potential profits and attempt to block such limitations.

“Good government and good governance do not wait to hear horror stories from its people about the ills that exist within its purview,” Darren Harold-Golden, a policy specialist with the Urban League, testified to the city council. “Instead, it is proactive in its approach to harmful issues so that people will not have horror stories to tell.”

Proponents of facial recognition technology say it can help screen out bad actors before they pull out a gun at a school, and can serve the more pedestrian goal of helping brands target consumers. But a surveillance state under capitalism carries the risk, backed by historical precedent, of unjustly targeting marginalized people.

According to a 2019 study from the National Institute of Standards and Technology, which compared 189 algorithms from 99 developers, the average facial recognition system regularly misidentifies people of color and women, which can lead to false arrests, denial of access to businesses, and denial of financial services.

While facial recognition algorithms are evolving, starting off with racial inequality doesn’t sit well with City Commissioner Jo Ann Hardesty.

“This is about racial justice and our right to privacy,” said Hardesty during a January 28 work session on the issue. “This is about whether or not we will allow companies that have a financial motive to gather our information and sell it and utilize it in a way that is detrimental to our community.”

The proposed ban drew some light pushback from Portland’s business and technology communities.

“Technology is not inherently good or bad,” Jon Isaacs, vice president of government affairs at the Portland Business Alliance, told city council members. “It has specific potential uses which can be problematic and may need to be regulated.”

Both Isaacs and Skip Newberry, president and CEO of the Technology Association of Oregon, suggested that a ban could signal to companies looking to relocate to the Portland area that the city is not tech-friendly.

Hardesty and the rest of the city council are undeterred, though they say they’re open to future tweaks.

“We see these [ordinances] as a ban but technology is going to keep moving forward,” says Hector Dominguez, an open data coordinator with Smart City PDX. “We want to create a space for the city to develop internal capacity to handle coming technological challenges around facial recognition technology and artificial intelligence.”